Originally posted by David (PassMark)


...yeah, kinda like it's "a bit painful" to optimize an application, compiler, compiler settings, libraries and all that for every bit of x86 kit on the market.

Let me ask a possibly-dumb question (I have a leg up on a lot of the people who will read this).

Is it better to optimize new hardware to run old code, or to optimize new code to run on new hardware even if that means worse performance on old hardware?

Say you choose some of the first and some of the second. What balance do you choose?

Clearly that depends on the set of pluses and minuses of your new hardware and software vs those of your competitors.

Backwards-compatibility and future-headroom can be opposing forces.

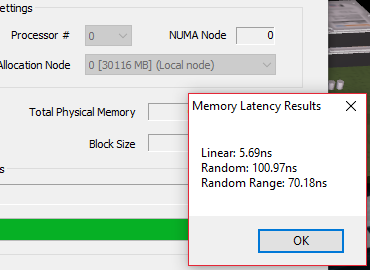

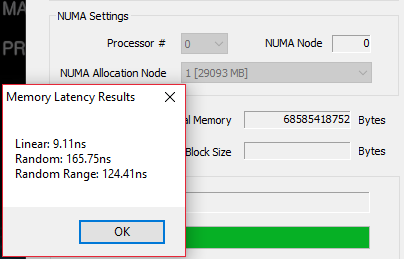

In the desktop/low-end workstation space, where NUMA is not a factor, you don't worry about it.

In the server and possibly high-end workstation space, where NUMA could be a significant factor, you do worry about it.

And you find out just how significant it could be. And if that is still not significant enough, you don't worry about it.

So if 99% of application developers don't worry about it, should a benchmark be written to make it A Big Deal?

Or should hardware developers make it transparent to software?

It becomes a question of how much you want to take on in terms of an upgrade.

If code is optimized for a fraction of the market, and that fraction is cutting-edge from a 2nd-tier supplier,

what are the odds that it will be well shaken-out at the time of deployment?

I see a rash of firmware and driver upgrades in your future, young Jedi.

The funny thing about high-performance computing is that you find out that the most important things are stability and consistency.

You need more performance, you throw more hardware onto the grid. But you don't throw hardware onto the grid that isn't both reliable and fully compatible.

Because nothing bums a client out more than having their system lock up when they begin to use it intensely.

The full feature set of cutting-edge hardware will never be used while it is still cutting edge. Eventually that feature set will be used, once the hardware is no longer cutting edge and some group of owners have taken one for the team.

Even then, some of that feature set will have proven to be hopelessly buggy and unfixable, and some will have proven to be not worth the time and effort to optimize for.

I guarantee that there will be a bunch of cheap boards out there that will never properly support the full feature set of AMD chips or even Intel chips, just as there will be OEMs that find that the full feature set isn't properly supported by the hardware vendors. And so they will disable it, and not care who whines about it.

And if a developer builds their code to it, assuming that it is there, and it is not there, and their code crashes, then tough luck. Developers who want their code to run well on a wide variety of systems will turn off or at least tone down their use of advanced features until those features are stable enough for them to ship.
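To make the "don't assume it is there" point concrete, here is a rough sketch of the check-then-fall-back pattern. It assumes a Linux box where `/proc/cpuinfo` lists the feature flags the kernel is willing to report. Every name in it (`read_cpu_flags`, `pick_sum`, `sum_fast`, `sum_baseline`, the `allow_fast` switch) is made up for illustration, and `sum_fast` is only a stand-in for a code path that would really use something like AVX2.

```python
from pathlib import Path


def read_cpu_flags(cpuinfo_path="/proc/cpuinfo"):
    """Return the CPU feature flags the kernel reports, or an empty set."""
    try:
        text = Path(cpuinfo_path).read_text()
    except OSError:
        # Not Linux, or the file is unreadable: assume no advanced features.
        return set()
    for line in text.splitlines():
        key, _, value = line.partition(":")
        # x86 Linux labels the line "flags"; some other architectures use "Features".
        if key.strip() in ("flags", "Features"):
            return set(value.split())
    return set()


def sum_baseline(values):
    """Plain path that should run anywhere."""
    total = 0
    for v in values:
        total += v
    return total


def sum_fast(values):
    """Stand-in for a path that would rely on an advanced feature."""
    return sum(values)


def pick_sum(flags, required=frozenset({"avx2"}), allow_fast=True):
    """Choose the fast path only if every required flag is reported.

    allow_fast is a hypothetical kill switch for toning the feature down
    until it has been shaken out in the field.
    """
    if allow_fast and required <= set(flags):
        return sum_fast
    return sum_baseline
```

Usage would be something like `total = pick_sum(read_cpu_flags())([1, 2, 3])`. If a board or OEM has disabled the feature and the kernel no longer reports the flag, the code quietly takes the baseline path instead of crashing, and the kill switch lets you ship with the fast path off.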

So if you do somehow tweak your dev env so that it builds code that runs like a scalded cat on a Threadripper system?

Odds are that it will be because that Threadripper system runs Pentium II code very well.

cheers
